Authors: Tsendsuren Munkhdalai, Manaal Faruqui, Siddharth Gopal

Published on: April 10, 2024

Impact Score: 7.4

arXiv ID: arXiv:2404.07143

Summary

- What is new: Introduces Infini-attention, an attention mechanism for scaling LLMs to infinitely long inputs with bounded memory and computation.

- Why this is important: Standard Transformer attention needs memory and compute that grow quadratically with input length, so handling very long inputs under limited memory and computational resources is otherwise impractical.

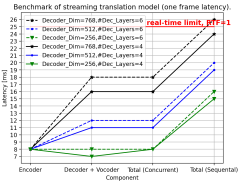

- What the research proposes: A novel attention mechanism, Infini-attention, that builds a compressive memory into standard attention and combines masked local attention with long-term linear attention within a single Transformer block.

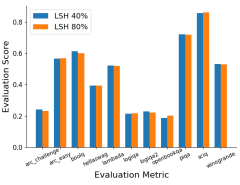

- Results: Effective on long-context language modeling benchmarks, on a passkey context block retrieval task at 1M sequence length, and on a book summarization task at 500K length, using 1B and 8B parameter models.
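
To make the proposed mechanism more concrete, here is a minimal NumPy sketch of one attention head processing a single segment. It is an illustration under stated assumptions, not the authors' implementation: the function names, the ELU+1 feature map, the additive memory update, and the single scalar gate `gate_logit` are all choices made for this example and are not stated in the summary above.

```python
import numpy as np

def elu_plus_one(x):
    # Positive feature map for the linear-attention memory (assumed choice).
    return np.where(x > 0, x + 1.0, np.exp(x))

def causal_softmax_attention(q, k, v):
    # Masked local attention: each position sees itself and earlier positions only.
    n, d = q.shape
    scores = q @ k.T / np.sqrt(d)
    future = np.triu(np.ones((n, n), dtype=bool), k=1)
    scores = np.where(future, -np.inf, scores)
    scores -= scores.max(axis=-1, keepdims=True)
    weights = np.exp(scores)
    weights /= weights.sum(axis=-1, keepdims=True)
    return weights @ v

def infini_attention_segment(q, k, v, memory, norm, gate_logit, eps=1e-6):
    # q, k: (seg_len, d_k); v: (seg_len, d_v); memory: (d_k, d_v); norm: (d_k,)
    sq, sk = elu_plus_one(q), elu_plus_one(k)
    # 1) Long-term read: linear attention over the compressed past segments.
    a_mem = (sq @ memory) / (sq @ norm + eps)[:, None]
    # 2) Local read: masked dot-product attention inside the current segment.
    a_local = causal_softmax_attention(q, k, v)
    # 3) Blend both reads with a gate (hypothetical scalar; learned in practice).
    g = 1.0 / (1.0 + np.exp(-gate_logit))
    out = g * a_mem + (1.0 - g) * a_local
    # 4) Fold this segment's keys and values into the fixed-size memory.
    new_memory = memory + sk.T @ v
    new_norm = norm + sk.sum(axis=0)
    return out, new_memory, new_norm

# Usage: stream three segments; the memory keeps shape (d_k, d_v) throughout.
rng = np.random.default_rng(0)
d_k, d_v, seg_len = 8, 8, 4
memory, norm = np.zeros((d_k, d_v)), np.zeros(d_k)
for _ in range(3):
    q, k, v = (rng.standard_normal((seg_len, d)) for d in (d_k, d_k, d_v))
    out, memory, norm = infini_attention_segment(q, k, v, memory, norm, gate_logit=0.0)
```

The point of the sketch is that the state carried between segments (`memory` and `norm`) has a fixed size, so the cost per segment does not grow with the total input length.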

Technical Details

Technological frameworks used: Transformer-based Large Language Models

Models used: 1B and 8B LLMs

Data used: Long-context language modeling benchmarks, passkey context block retrieval, and book summarization tasks

Potential Impact

The work could affect the content creation, data analysis, and information retrieval sectors, including companies specializing in AI-driven text analysis, summarization, and long-text processing.

Want to implement this idea in a business?

We have generated a startup concept here: InfiniText.
