Authors: Ziying Song, Lei Yang, Shaoqing Xu, Lin Liu, Dongyang Xu, Caiyan Jia, Feiyang Jia, Li Wang

Published on: March 18, 2024

Impact Score: 7.2

arXiv code: arXiv:2403.11848

Summary

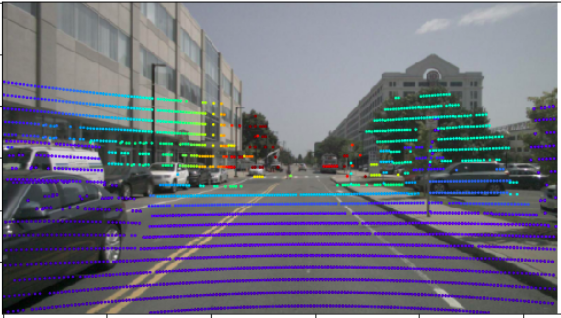

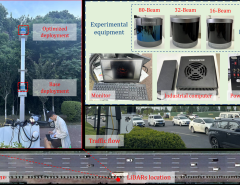

- What is new: Introduces GraphBEV, a robust fusion framework that uses graph matching to align LiDAR and camera data more accurately for 3D object detection.

- Why this is important: Existing methods that fuse LiDAR and camera data for 3D object detection lose accuracy when the sensors are misaligned, which degrades both depth estimation and feature alignment.

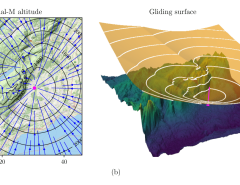

- What the research proposes: The GraphBEV framework, which combines a Local Align module that builds neighbor-aware depth features with a Global Align module that corrects misalignment between LiDAR and camera BEV features.

- Results: Achieves state-of-the-art performance with an mAP of 70.1% on the nuScenes validation set, surpassing previous methods and improving significantly under conditions with alignment noise.
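To make the "neighbor-aware depth" idea concrete, here is a minimal sketch, not the authors' implementation. It assumes LiDAR points have already been projected to image pixels, and for each point it gathers the depths of its `k` nearest projected neighbors, so a slightly misprojected point still has plausible nearby depths to draw on. The function name `neighbor_depths` and the parameter `k` are hypothetical, and the brute-force NumPy search stands in for whatever neighbor search and learned modules the paper actually uses.

```python
import numpy as np

def neighbor_depths(pixels, depths, k=4):
    """Gather, for each projected LiDAR point, the depths of its k nearest
    projected neighbors in pixel space (the point itself included).

    pixels: (N, 2) array of projected pixel coordinates (assumed input).
    depths: (N,) array of depths for the same points (assumed input).
    Returns an (N, k) array of neighbor depths, nearest first.
    """
    pixels = np.asarray(pixels, dtype=float)
    depths = np.asarray(depths, dtype=float)
    # Pairwise squared pixel distances, shape (N, N). Brute force for clarity.
    diff = pixels[:, None, :] - pixels[None, :, :]
    dist2 = (diff ** 2).sum(axis=-1)
    # Indices of the k closest points per row; a stable sort keeps ties ordered.
    idx = np.argsort(dist2, axis=1, kind="stable")[:, :k]
    return depths[idx]

# Two small clusters of projected points with different depths.
pixels = [[0, 0], [1, 0], [10, 10], [11, 10]]
depths = [5.0, 6.0, 20.0, 21.0]
print(neighbor_depths(pixels, depths, k=2))
```

In this toy input each point only picks up depths from its own cluster, which is the property a neighbor-aware depth feature relies on when the projection is off by a few pixels.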

Technical Details

Technological frameworks used: GraphBEV

Models used: Local Align module with graph matching, Global Align module

Data used: nuScenes validation set

Potential Impact

Automotive industry, specifically companies focused on autonomous driving technologies and advanced driver-assistance systems (ADAS).

