Authors: Al Amin, Kamrul Hasan, Saleh Zein-Sabatto, Deo Chimba, Imtiaz Ahmed, Tariqul Islam

Published on: March 07, 2024

Impact Score: 8.0

arXiv ID: arXiv:2403.04130

Summary

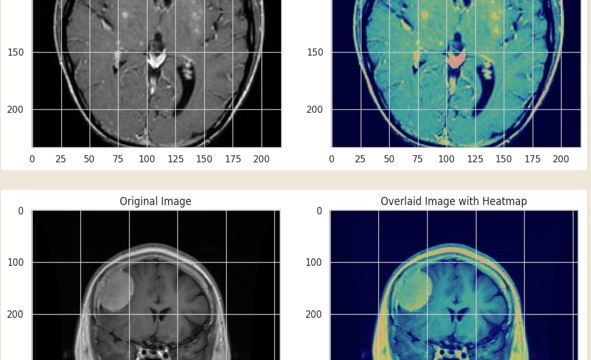

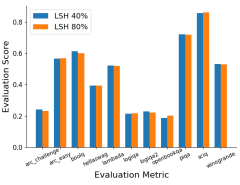

- What is new: A custom XAI framework that applies LIME, SHAP, and Grad-CAM in the Artificial Intelligence of Medical Things (AIoMT) domain to make decision-making in medical applications more transparent and interpretable.

- Why this is important: AI models used in healthcare are complex, so transparent, interpretable decision-making is crucial.

- What the research proposes: A custom Explainable Artificial Intelligence (XAI) framework designed for AIoMT that uses ensemble-based deep learning (DL) methods for accurate and trustworthy medical diagnoses.

- Results: High precision, recall, and F1 scores with 99% training accuracy and 98% validation accuracy in brain tumor detection, demonstrating the framework’s effectiveness in making precise and reliable diagnoses.
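The precision, recall, and F1 scores named in the results can be illustrated with a minimal sketch. The labels below are made up for illustration and are not the paper's data; the helper name `binary_metrics` is hypothetical.

```python
def binary_metrics(y_true, y_pred, positive=1):
    """Return (precision, recall, f1) for a single positive class."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == positive and p == positive)
    fp = sum(1 for t, p in pairs if t != positive and p == positive)
    fn = sum(1 for t, p in pairs if t == positive and p != positive)
    # Guard against division by zero when a class is never predicted or never present.
    precision = tp / (tp + fp) if (tp + fp) else 0.0
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    denom = precision + recall
    f1 = 2 * precision * recall / denom if denom else 0.0
    return precision, recall, f1


# Hypothetical labels: 1 = tumor, 0 = no tumor (illustrative only).
y_true = [1, 1, 1, 0, 0, 1, 0, 1]
y_pred = [1, 1, 0, 0, 1, 1, 0, 1]

precision, recall, f1 = binary_metrics(y_true, y_pred)
print(f"precision={precision:.2f} recall={recall:.2f} f1={f1:.2f}")
```

Precision penalizes false alarms and recall penalizes missed tumors, which is why a diagnostic summary usually reports both alongside accuracy.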

Technical Details

Technological frameworks used: Custom XAI

Models used: multiple CNNs combined by majority voting, with LIME, SHAP, and Grad-CAM as explanation techniques

Data used: AIoMT for brain tumor detection
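The majority voting step over multiple CNNs can be sketched as follows. This is a minimal illustration that assumes hard voting over predicted class labels; the summary does not say whether the paper uses hard or soft voting, and the model names and predictions here are hypothetical.

```python
from collections import Counter


def majority_vote(votes):
    """Return the label most models agree on for one sample.

    Ties go to the label seen first among the votes, an arbitrary choice
    made for this sketch.
    """
    return Counter(votes).most_common(1)[0][0]


def ensemble_predict(per_model_predictions):
    """Combine predictions where per_model_predictions[m][i] is model m's label for sample i."""
    return [majority_vote(votes) for votes in zip(*per_model_predictions)]


# Hypothetical outputs of three CNNs on four scans (illustrative only).
cnn_a = ["tumor", "no_tumor", "tumor", "no_tumor"]
cnn_b = ["tumor", "tumor", "tumor", "no_tumor"]
cnn_c = ["no_tumor", "no_tumor", "tumor", "no_tumor"]

final = ensemble_predict([cnn_a, cnn_b, cnn_c])
print(final)
```

Using an odd number of models avoids ties in the binary case, so the tie-breaking rule only matters with an even number of voters or more than two classes.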

Potential Impact

The healthcare industry, particularly companies involved in medical diagnostics and AI solutions for healthcare.

