EdgeIntelli

Elevator Pitch: EdgeIntelli brings the future of AI into the present by deploying next-generation large language models at the network edge over 6G, improving the speed, privacy, and accessibility of AI across industries.

Concept

Deploying large language models (LLMs) with 6G mobile edge computing (MEC), so that data is processed close to where it is generated rather than in a distant cloud. This improves AI responsiveness and data privacy and reduces bandwidth costs in sectors such as healthcare and robotics.

Objective

To transform AI deployment by running LLMs on 6G MEC infrastructure, delivering low-latency, privacy-enhanced, and cost-efficient AI applications.

Solution

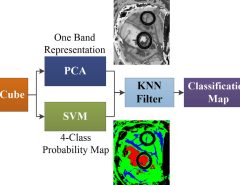

EdgeIntelli uses split learning, parameter-efficient fine-tuning, quantization, and parameter sharing to fit efficient LLMs onto resource-constrained edge infrastructure.
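The following is a minimal sketch, not EdgeIntelli's actual implementation, of how two of these techniques can work together. A toy model is split between a user device and a 6G MEC server (the inference-time forward pass of split learning), and the intermediate activations are quantized to int8 before being sent uplink. The two-layer network, its layer sizes, and the names `DeviceHead`, `EdgeTail`, and `quantize_int8` are hypothetical. A real deployment would split a transformer at a block boundary and use a framework's quantization tooling. Only activations cross the network, never raw inputs. Activations can still leak some information, however, so the split alone is not a privacy guarantee.

```python
import random
from typing import List, Tuple

Matrix = List[List[float]]
Vector = List[float]


def matvec(w: Matrix, x: Vector) -> Vector:
    """Multiply a weight matrix (one row per output) by an input vector."""
    return [sum(wi * xi for wi, xi in zip(row, x)) for row in w]


def relu(x: Vector) -> Vector:
    return [max(0.0, v) for v in x]


def quantize_int8(x: Vector) -> Tuple[List[int], float]:
    """Symmetric per-tensor int8 quantization: q = round(x / scale), scale = max|x| / 127."""
    max_abs = max((abs(v) for v in x), default=0.0)
    scale = max_abs / 127.0 if max_abs > 0 else 1.0
    q = [max(-127, min(127, round(v / scale))) for v in x]
    return q, scale


def dequantize_int8(q: List[int], scale: float) -> Vector:
    return [v * scale for v in q]


def random_matrix(rows: int, cols: int, rng: random.Random) -> Matrix:
    return [[rng.uniform(-0.5, 0.5) for _ in range(cols)] for _ in range(rows)]


class DeviceHead:
    """Hypothetical on-device part: runs the first layer; the raw input stays local."""

    def __init__(self, w: Matrix) -> None:
        self.w = w

    def forward(self, x: Vector) -> Tuple[List[int], float]:
        h = relu(matvec(self.w, x))
        return quantize_int8(h)  # compact payload sent uplink to the edge server


class EdgeTail:
    """Hypothetical MEC-server part: dequantizes the payload and runs the remaining layer."""

    def __init__(self, w: Matrix) -> None:
        self.w = w

    def forward(self, q: List[int], scale: float) -> Vector:
        return matvec(self.w, dequantize_int8(q, scale))


# Usage: compare the split, quantized pipeline with running the full model in one place.
rng = random.Random(0)
w1 = random_matrix(16, 8, rng)  # assumed sizes: 8 inputs -> 16 hidden units
w2 = random_matrix(4, 16, rng)  # 16 hidden units -> 4 outputs
x = [rng.uniform(-1.0, 1.0) for _ in range(8)]

device, edge = DeviceHead(w1), EdgeTail(w2)
q, scale = device.forward(x)
y_split = edge.forward(q, scale)
y_full = matvec(w2, relu(matvec(w1, x)))

max_err = max(abs(a - b) for a, b in zip(y_split, y_full))
print(f"uplink payload: {len(q)} int8 values ({len(q)} bytes) vs {4 * len(q)} bytes as float32")
print(f"max output error from quantization: {max_err:.4f}")
```

Sending int8 instead of float32 activations cuts the uplink payload to roughly a quarter, plus one scale value, at the cost of a small, bounded rounding error. Where to place the split trades on-device compute against how much data has to cross the network.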

Revenue Model

Subscriptions for enterprises, pay-as-you-go pricing for small and medium-sized enterprises (SMEs), and customization services for industry-specific applications.

Target Market

Healthcare providers, robotics manufacturers, tech companies seeking AI solutions, and enterprises requiring edge computing AI applications.

Expansion Plan

Start with healthcare and robotics sectors, then expand to other industries such as finance, retail, and manufacturing with tailored AI solutions.

Potential Challenges

The limited compute, memory, and energy budgets of edge devices; ensuring data privacy and security; and building scalable, robust edge AI applications.

Customer Problem

The need for faster, more reliable, and privacy-compliant AI applications without incurring high bandwidth costs.

Regulatory and Ethical Issues

Complying with data protection regulations such as GDPR and HIPAA, ensuring the ethical use of AI, and maintaining transparency and accountability in AI decisions.

Disruptiveness

Shifts AI deployment from cloud-centric to edge-centric models. Because requests are processed close to users, response times drop sharply, which fosters growth in real-time AI applications.

Check out our related research summary: here.
