Authors: Dan Zhao, Siddharth Samsi, Joseph McDonald, Baolin Li, David Bestor, Michael Jones, Devesh Tiwari, Vijay Gadepally

Published on: February 25, 2024

Impact Score: 8.2

arXiv ID: arXiv:2402.18593

Summary

- What is new: First detailed analysis of the effects of GPU power-capping at the supercomputing scale for more sustainable AI.

- Why this is important: Training and deploying state-of-the-art AI models drives massive demand for hardware accelerators, which brings significant energy usage and potential carbon emissions.

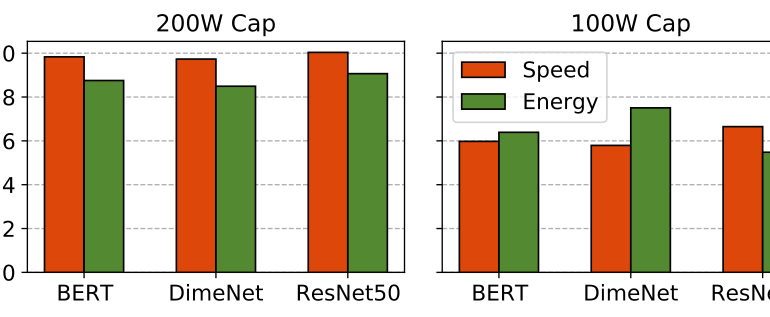

- What the research proposes: Power-capping GPUs. The study shows that capping significantly decreases temperature and power draw, suggesting a way to reduce power consumption and potentially extend hardware lifespan with minimal impact on job performance.

- Results: Power-capping effectively reduced GPU temperature and power draw. However, the paper also notes that overall energy consumption could increase if users compensate for perceived job performance degradation by submitting additional GPU jobs.
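
The rebound effect noted in the results can be made concrete with simple energy arithmetic (energy = average power × runtime). The sketch below is only an illustration: the wattages, runtimes, and job counts are hypothetical values chosen for the example, not figures from the paper, and the function names are invented here.

```python
# Illustrative energy arithmetic for GPU power-capping.
# All numbers below are hypothetical and are NOT taken from the paper.


def job_energy_kwh(avg_power_w: float, runtime_h: float) -> float:
    """Energy of one GPU job in kWh: average power (W) x runtime (h) / 1000."""
    return avg_power_w * runtime_h / 1000.0


def total_energy_kwh(avg_power_w: float, runtime_h: float, n_jobs: int) -> float:
    """Total energy for n_jobs identical jobs."""
    return n_jobs * job_energy_kwh(avg_power_w, runtime_h)


def break_even_jobs(baseline_total_kwh: float, capped_job_kwh: float) -> float:
    """Number of capped jobs whose total energy equals the uncapped baseline."""
    return baseline_total_kwh / capped_job_kwh


# Assumed scenario: an uncapped job averages 250 W for 10 h;
# a capped job averages 200 W but runs slightly longer, 10.5 h.
baseline = total_energy_kwh(250.0, 10.0, n_jobs=10)
capped_same_jobs = total_energy_kwh(200.0, 10.5, n_jobs=10)
capped_extra_jobs = total_energy_kwh(200.0, 10.5, n_jobs=12)

print(f"baseline, 10 jobs: {baseline:.1f} kWh")
print(f"capped, 10 jobs:   {capped_same_jobs:.1f} kWh")
print(f"capped, 12 jobs:   {capped_extra_jobs:.1f} kWh")
print(f"break-even jobs:   {break_even_jobs(baseline, job_energy_kwh(200.0, 10.5)):.2f}")
```

Under these assumed numbers, capping saves energy when the job count stays the same, but the saving disappears once users submit roughly two extra jobs for every ten, which is the kind of compensation behavior the paper warns about.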

Technical Details

Technological frameworks used: Detailed analysis conducted at a research supercomputing center.
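
This summary does not say which tooling the center used to apply the caps. As one possible illustration, on NVIDIA GPUs a power limit is commonly set with the `nvidia-smi` command-line tool and its `-pl` (power limit, in watts) option, which typically requires administrator privileges. The sketch below only builds and prints such a command; the helper name `power_cap_command` and the 200 W value are assumptions made for this example.

```python
import shlex
from typing import List


def power_cap_command(gpu_index: int, limit_watts: int) -> List[str]:
    """Build (but do not run) an nvidia-smi command that caps one GPU's power.

    Hypothetical helper for illustration. The allowed range of limit_watts
    depends on the GPU model and is not checked here.
    """
    if gpu_index < 0:
        raise ValueError("gpu_index must be non-negative")
    if limit_watts <= 0:
        raise ValueError("limit_watts must be positive")
    # -i selects the GPU by index; -pl sets the power limit in watts.
    return ["nvidia-smi", "-i", str(gpu_index), "-pl", str(limit_watts)]


# Example: cap GPU 0 at an assumed 200 W. The command is only printed;
# an operator could run it with subprocess.run(cmd, check=True).
cmd = power_cap_command(0, 200)
print(shlex.join(cmd))
```

Building the command separately from running it keeps the example safe to execute on machines without a GPU and makes the chosen limit easy to review before it is applied.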

Models used: State-of-the-art models in NLP, computer vision, etc., requiring AI hardware acceleration.

Data used: Aggregate GPU temperature and power-draw measurements, used to assess the effect of power-capping.

Potential Impact

GPU manufacturers, HPC/datacenter operators, and companies in sectors reliant on large-scale AI deployments could be directly affected by, or could benefit from adopting, the sustainable practices highlighted by this paper.

