Authors: Andrew Blair-Stanek, Nils Holzenberger, Benjamin Van Durme

Published on: November 16, 2023

Impact Score: 7.4

arXiv ID: arXiv:2311.09693

Summary

- What is new: Fine-tuning smaller LLMs on basic legal text-handling tasks yields near-perfect performance, suggesting that foundation LLMs lack certain domain-specific behaviors unless those behaviors are deliberately trained in by experts.

- Why this is important: In zero-shot settings, leading LLMs such as GPT-4 perform poorly on basic legal text tasks that practitioners would expect them to handle.

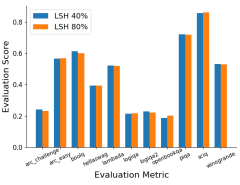

- What the research proposes: A benchmark of basic legal text tasks, plus fine-tuning LLMs on those tasks to improve their performance.

- Results: Fine-tuned LLMs reached near-perfect performance on the benchmark, which could make them considerably more useful for routine work in legal practice.
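The summary names deposition transcripts as source material but does not spell out the task format. As a hedged illustration only, the Python sketch below builds one hypothetical lookup question of the kind a basic legal-text benchmark might contain: given a transcript laid out with page and line numbers, return the exact text at a cited page and line. The 25-lines-per-page layout, the prompt wording, and the helpers `paginate`, `render`, `make_item`, and `exact_match` are assumptions for illustration, not the paper's benchmark.

```python
import random
from dataclasses import dataclass

LINES_PER_PAGE = 25  # assumed transcript layout; not specified in the summary


@dataclass
class LookupItem:
    prompt: str  # what the model sees
    answer: str  # gold text used for exact-match scoring


def paginate(lines, lines_per_page=LINES_PER_PAGE):
    """Map 1-indexed (page, line) citations to the transcript text on that line."""
    index = {}
    for i, text in enumerate(lines):
        page, line = divmod(i, lines_per_page)
        index[(page + 1, line + 1)] = text
    return index


def render(index):
    """Lay the transcript out as it would be cited: page headers, then numbered lines."""
    out, current_page = [], None
    for (page, line), text in sorted(index.items()):
        if page != current_page:
            out.append(f"Page {page}")
            current_page = page
        out.append(f"{line:>2}  {text}")
    return "\n".join(out)


def make_item(lines, rng):
    """Build one hypothetical 'what is at page P, line L?' question."""
    index = paginate(lines)
    page, line = rng.choice(sorted(index))
    prompt = (
        render(index)
        + f"\n\nQuestion: What is the exact text of page {page}, line {line}?"
    )
    return LookupItem(prompt=prompt, answer=index[(page, line)])


def exact_match(prediction, gold):
    """Score 1.0 only if the texts match after collapsing whitespace, else 0.0."""
    return float(" ".join(prediction.split()) == " ".join(gold.split()))


if __name__ == "__main__":
    # Toy alternating Q/A transcript spanning three pages.
    transcript = [
        f"Q. Question number {i}?" if i % 2 == 0 else f"A. Answer number {i}."
        for i in range(60)
    ]
    item = make_item(transcript, random.Random(0))
    print(item.prompt.splitlines()[-1])
    print(exact_match(item.answer, item.answer))  # 1.0
```

Exact match is a natural scorer here because a page-and-line citation has exactly one correct string; a model that paraphrases or shifts by one line scores zero, which mirrors how an off-by-one citation would be wrong in a real filing.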

Technical Details

Frameworks used: Fine-tuning methods for LLMs

Models used: GPT-4, Claude, PaLM 2

Data used: Legal texts including witness depositions and contract subsections
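The summary does not describe how the fine-tuning data was prepared. As a rough sketch, the Python below packages contract-subsection lookups as supervised training examples, assuming the chat-style `messages` JSONL layout (system, user, assistant turns) accepted by OpenAI's fine-tuning endpoint; nothing in the summary says the authors used that format. `SYSTEM_PROMPT`, `subsection_pairs`, `to_chat_record`, `split_and_serialize`, and the 80/20 train/test split are hypothetical choices.

```python
import json
import random

# Hypothetical instruction; the summary does not give the authors' prompt.
SYSTEM_PROMPT = "Answer with the exact text requested, and nothing else."


def subsection_pairs(sections):
    """Turn a {label: text} map of contract subsections into (prompt, answer) pairs.

    `sections` is a hypothetical flattened contract, e.g. {"4(b)": "..."}.
    """
    body = "\n".join(f"{label} {text}" for label, text in sections.items())
    return [
        (f"{body}\n\nQuestion: What is the text of subsection {label}?", text)
        for label, text in sections.items()
    ]


def to_chat_record(prompt, answer):
    """One training example in the chat-style `messages` layout."""
    return {
        "messages": [
            {"role": "system", "content": SYSTEM_PROMPT},
            {"role": "user", "content": prompt},
            {"role": "assistant", "content": answer},
        ]
    }


def split_and_serialize(pairs, test_fraction=0.2, seed=0):
    """Shuffle, hold out a test split, and return (train_jsonl, test_jsonl) strings."""
    pairs = list(pairs)
    random.Random(seed).shuffle(pairs)
    n_test = max(1, int(len(pairs) * test_fraction))
    test, train = pairs[:n_test], pairs[n_test:]

    def dump(rows):
        # One JSON object per line, as JSONL requires.
        return "\n".join(json.dumps(to_chat_record(p, a)) for p, a in rows)

    return dump(train), dump(test)


if __name__ == "__main__":
    contract = {
        "4(a)": "Seller shall deliver the goods by March 1.",
        "4(b)": "Buyer shall pay within 30 days of delivery.",
        "4(c)": "Late payments accrue interest at 1% per month.",
    }
    train_jsonl, test_jsonl = split_and_serialize(subsection_pairs(contract))
    print(train_jsonl)
```

Holding out a test split before fine-tuning matters here: the point of the benchmark is to measure whether the model learned the lookup behavior, not whether it memorized particular subsections.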

Potential Impact

Legal tech companies, law firms, and paralegal services.

