Authors: Xingpeng Sun, Haoming Meng, Souradip Chakraborty, Amrit Singh Bedi, Aniket Bera

Published on: February 05, 2024

Impact Score: 8.3

arXiv ID: arXiv:2402.03494

Summary

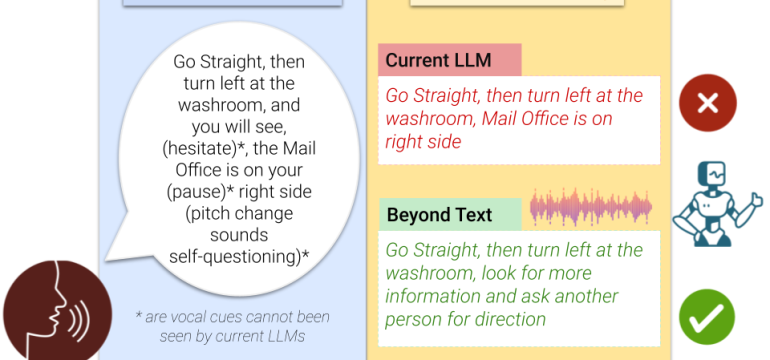

- What is new: ‘Beyond Text’ integrates audio features into LLMs for human-robot interaction, enhancing decision-making.

- Why this is important: Text-based LLMs fail to capture nuances in human-robot interactions, leading to trust issues.

- What the research proposes: An approach that gives the LLM paralinguistic features extracted from the audio alongside the audio transcription, rather than the transcribed text alone.

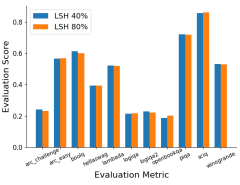

- Results: ‘Beyond Text’ outperforms existing LLMs with a 70.26% winning rate and shows greater resistance to adversarial attacks.

Technical Details

Technological frameworks used: Not specified

Models used: Large Language Models (LLMs) with audio feature integration

Data used: Audio transcriptions, paralinguistic features
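
The combination of a transcript with paralinguistic features described above can be illustrated with a minimal sketch. It is not the authors' implementation: the feature set (pitch, loudness, speech rate), the `VocalCues` class, the function names, and the prompt wording are all assumptions made for illustration. Extracting the features from audio and calling an LLM are both out of scope here.

```python
from dataclasses import dataclass


@dataclass
class VocalCues:
    """Hypothetical paralinguistic features for one spoken utterance."""
    mean_pitch_hz: float
    mean_loudness_db: float
    speech_rate_wps: float  # words per second


def describe_cues(cues: VocalCues) -> str:
    """Render the numeric features as a short text description."""
    return (
        f"mean pitch {cues.mean_pitch_hz:.0f} Hz, "
        f"mean loudness {cues.mean_loudness_db:.0f} dB, "
        f"speech rate {cues.speech_rate_wps:.1f} words/s"
    )


def build_prompt(transcript: str, cues: VocalCues) -> str:
    """Combine the transcript and vocal cues into one text prompt (assumed format)."""
    return (
        "A person gave a spoken navigation instruction to a robot.\n"
        f'Transcript: "{transcript}"\n'
        f"Vocal cues: {describe_cues(cues)}\n"
        "Considering both the words and how they were spoken, "
        "how confident does the speaker sound?"
    )


# Example values are made up for illustration only.
prompt = build_prompt(
    "Um, I think you should turn left here",
    VocalCues(mean_pitch_hz=182.0, mean_loudness_db=54.0, speech_rate_wps=1.8),
)
print(prompt)
```

The point of the sketch is only that the model receives more than the words: the same transcript paired with different vocal cues yields a different prompt, which a text-only pipeline cannot distinguish.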

Potential Impact

Social robot navigation, AI system developers, human-robot interaction platforms

