Authors: Shivanshu Shekhar, Tanishq Dubey, Koyel Mukherjee, Apoorv Saxena, Atharv Tyagi, Nishanth Kotla

Published on: January 29, 2024

Impact Score: 8.22

arXiv ID: arXiv:2402.01742

Summary

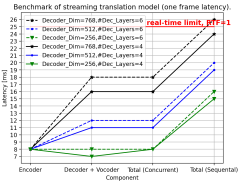

- What is new: A method for reducing LLM usage costs. It predicts the output quality of each candidate LLM and then runs an optimization routine that selects an LLM by trading off quality, latency, and cost.

- Why this is important: LLM usage is expensive, and performance varies across LLMs on document processing tasks, so the choice of model affects both cost and quality.

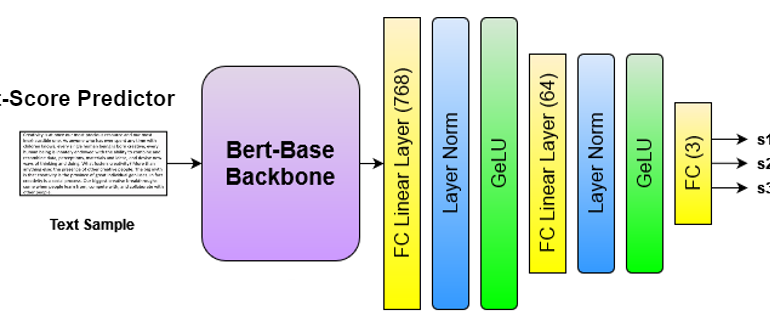

- What the research proposes: A predictive model for LLM output quality, an LP rounding algorithm for LLM selection optimization, and sentence simplification and deterministic heuristics for token reduction.

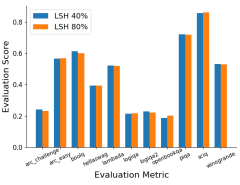

- Results: Costs fell by 40% to 90% and quality improved by 4% to 7%, measured on both enterprise and open-source datasets.
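The selection step can be illustrated with a minimal sketch. It is not the paper's algorithm: it assumes a simplified setting with one budget constraint, ignores latency, and takes the predicted quality scores as given. Under those assumptions the problem is a multiple-choice knapsack, whose LP relaxation can be solved greedily over each input's cost/quality convex hull. Rounding then drops the single upgrade the LP would have taken fractionally. All names (`upper_hull`, `select_models`, the model labels, the numbers) are hypothetical.

```python
from typing import List, Tuple

# One option = (model_name, predicted_quality, cost). All values are illustrative.
Option = Tuple[str, float, float]


def upper_hull(options: List[Option]) -> List[Option]:
    """Keep only options on the upper convex hull of (cost, quality)."""
    ordered = sorted(options, key=lambda o: (o[2], -o[1]))
    hull: List[Option] = []
    for o in ordered:
        # Dominated: costs at least as much as the last kept option, no better quality.
        if hull and o[1] <= hull[-1][1]:
            continue
        # Remove hull points that an LP would never use (non-decreasing slope).
        while len(hull) >= 2:
            a, b = hull[-2], hull[-1]
            slope_ab = (b[1] - a[1]) / (b[2] - a[2])
            slope_bo = (o[1] - b[1]) / (o[2] - b[2])
            if slope_bo >= slope_ab:
                hull.pop()
            else:
                break
        hull.append(o)
    return hull


def select_models(candidates: List[List[Option]], budget: float):
    """Greedy LP relaxation plus round-down for one model per input."""
    hulls = [upper_hull(opts) for opts in candidates]
    choice = [0] * len(hulls)            # start from the cheapest option per input
    spent = sum(h[0][2] for h in hulls)
    if spent > budget:
        raise ValueError("budget is too small even for the cheapest models")

    # Each upgrade moves one input one step up its hull.
    upgrades = []
    for i, h in enumerate(hulls):
        for k in range(1, len(h)):
            dq = h[k][1] - h[k - 1][1]
            dc = h[k][2] - h[k - 1][2]
            upgrades.append((dq / dc, i, k, dc))
    upgrades.sort(key=lambda u: -u[0])   # best quality gain per unit cost first

    for _ratio, i, k, dc in upgrades:
        if spent + dc > budget:
            break                        # the LP would take this one fractionally; round down
        choice[i] = k
        spent += dc
    return [hulls[i][choice[i]][0] for i in range(len(hulls))], spent


candidates = [
    [("small", 0.60, 1.0), ("large", 0.90, 10.0)],
    [("small", 0.85, 1.0), ("large", 0.88, 10.0)],
    [("small", 0.40, 1.0), ("medium", 0.80, 3.0), ("large", 0.85, 10.0)],
]
names, spent = select_models(candidates, budget=15.0)
print(names, spent)
```

In this toy run the budget goes to the inputs where a larger model is predicted to help most per unit of cost, while the input that the small model already handles well stays on the small model.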

Technical Details

Technological frameworks used: LP rounding optimization, sentence simplification models, deterministic heuristics

Models used: Predictive models for LLM output quality

Data used: Enterprise datasets, open-source datasets annotated for this study
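The summary does not say which deterministic heuristics the authors use for token reduction. The sketch below only shows the general idea of rule-based prompt shortening, with two assumed rules: collapsing whitespace and replacing verbose phrases. The `REPLACEMENTS` table, the function names, and the whitespace-based token count are all hypothetical stand-ins.

```python
import re

# Hypothetical rewrite rules: verbose phrase -> shorter equivalent.
REPLACEMENTS = {
    "in order to": "to",
    "due to the fact that": "because",
    "at this point in time": "now",
}


def reduce_tokens(text: str) -> str:
    """Apply deterministic, rule-based shortening to a prompt."""
    out = re.sub(r"\s+", " ", text).strip()  # collapse runs of whitespace
    for phrase, short in REPLACEMENTS.items():
        pattern = r"\b" + re.escape(phrase) + r"\b"
        out = re.sub(pattern, short, out, flags=re.IGNORECASE)
    return out


def approx_token_count(text: str) -> int:
    """Crude proxy: whitespace-separated words, not a real tokenizer."""
    return len(text.split())


original = "We met   in order to plan,\n due to the fact that costs rose."
shorter = reduce_tokens(original)
print(shorter)
print(approx_token_count(original), "->", approx_token_count(shorter))
```

Because the rules are deterministic, the same input always yields the same shortened prompt, which keeps the cost savings predictable. A real deployment would measure length with the target model's own tokenizer.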

Potential Impact

Companies that rely heavily on LLMs for document processing, AI service providers, and enterprises focused on cost-efficient AI deployments.

